Resources

mPower Software Services today announced it has been awarded a prime contract by the Federal Aviation Administration under the Electronic FAA Accelerated and Simplified Tasks (eFAST) contract vehicle. eFAST is a multi-year Master Ordering Agreement (MOA) program and is the FAA’s preferred acquisition vehicle for fulfilling Agency Small Business Goals to provide comprehensive management, engineering, and technical support services.

mPower Software Services today announced it has been awarded a subcontract by Lockheed Martin Corporation to assist in the development and implementation of the Time Based Flow Management (TBFM) program for the Federal Aviation Administration's Office of System Operations Programs.

A continuation contract with CSC on the U.S. Army LMP program has been awarded to mPower Software Services. mPower is looking for qualified candidates and can provide competitive salaries along with an excellent benefits package. Openings are available for individuals with skills in the following areas: SAP technical developers (all disciplines), middleware applications administrators, SAP functional ERP specialists, training analysts, principal developers, Oracle DBAs, and quality assurance analysts.

Released this month, mPower Standardized is a complete desktop lifecycle management solution from mPower Software Services designed to resolve, simplify, and manage large-scale corporate desktop challenges. From multiple PC images and non-standard applications to network device security and software compliance issues, there are many hurdles that enterprise-level businesses struggle to overcome. mPower Standardized provides a unique framework for simplifying desktop management and desktop standardization that can be implemented when tackling many enterprise IT initiatives such as Windows 7 migrations, technology refreshes, vendor or license audits, and application rationalization. In addition, through a partnership with BDNA of Mountain View, CA, mPower Standardized delivers comprehensive IT visibility and data normalization tools used to discover and rationalize an IT environment faster and more accurately than any other available tools.

mPower Software Services of Newtown, PA and BDNA of Mountain View, CA have announced a partnership between the two firms. mPower will be integrating BDNA's IT discovery and visibility solutions into many of its desktop solution services. In return, BDNA has selected mPower as a service partner to provide BDNA clients with professional consultation, assessment, analysis, and implementation using BDNA products. Windows 7 migrations, technology refreshes, and software license compliance audits are some of the desktop solutions for which mPower will use BDNA products.

mPower will be presenting along with BDNA and guest speaker Don Jones, MVP, Concentrated Tech, on the topic of Windows 7 migration and its impact on software licenses. Specifically, mPower will focus on application standardization and highlight mPower Standardized, an approach to simplifying desktop management, and how that approach can pay big dividends when tackling a large-scale Windows 7 migration.

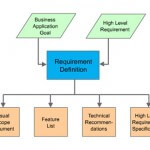

At mPower we follow a process-oriented development methodology designed to minimize project risks and development time. We focus on business solutions that fulfill business goals, instead of merely providing technical solutions. All our applications are built on the basis of this philosophy. The approach that we adopt is the spiral iterative methodology, in which the project goes through one or more iterations of all project stages. The above presentation gives a brief overview of the process.

Computer Sciences Corporation (CSC), under contract with the Federal Aviation Administration, is modernizing the Traffic Flow Management (TFM) System. The TFM System is a strategic air traffic control system that supports national air traffic planning. CSC is following a full software development lifecycle. The architecture is service oriented, and the development work uses Java within a J2EE environment. mPower Software Services is providing software development activities that include design, code and test, QA, systems administration, technical writing, and site survey.

The Logistics Modernization Program (LMP) is the Army's core initiative to totally replace the two largest, most important warfighting support national-level logistics systems: the inventory management Commodity Command Standard System (CCSS) and the depot and arsenal operations Standard Depot System (SDS). LMP is a backbone for achieving the Army Log Domain Strategic IT Plan and the Single Army Logistics Enterprise (SALE) vision. mPower Software Services provides development and programming support to CSC for portions of this initiative.

mPower is engaged by ABi to provide project management, programming, and development support for the ABiCS system. ABiCS is a one-of-a-kind solution in the ISP marketplace, making consolidation of networks, implementation, support, and billing possible through a one-stop-shop methodology.